onnx2tf

A tool for converting ONNX files to LiteRT/TFLite/TensorFlow, PyTorch native code (nn.Module), TorchScript (.pt), state_dict (.pt), Exported Program (.pt2), and Dynamo ONNX. It also supports direct conversion from LiteRT to PyTorch.

You should use LiteRT Torch rather than onnx2tf. https://github.com/google-ai-edge/litert-torch and https://github.com/google-ai-edge/ai-edge-quantizer

Model Conversion Status

https://github.com/PINTO0309/onnx2tf/wiki/model_status

Supported layers

flatbuffer_direct execution path

Currently, the flatbuffer_direct backend is faster and has a higher success rate than the default tf_converter backend. The simplest conversion command for flatbuffer_direct outputs only a LiteRT model, but if you add --flatbuffer_direct_output_saved_model, it will output a saved_model as before. However, what is different from the previous behavior is that it will build the graph of the saved_model from the LiteRT model.

[!IMPORTANT]

Starting with onnx2tf v2.4.0, tf_converter will be deprecated and the default backend will be switched to flatbuffer_direct. With the v2.3.3 update, all backward compatible conversion options have been migrated to flatbuffer_direct, so I will only be doing minor bug fixes until April. If you provide us with ONNX sample models, I will consider incorporating them into flatbuffer_direct. I’ll incorporate ai-edge-quantizer when I feel like it, but that will probably be about 10 years from now.

When --tflite_backend flatbuffer_direct is selected, onnx2tf now uses a direct fast path for both ONNX input and -it/--input_tflite_file_path input:

- ONNX graph preprocessing (

tflite_builder.preprocess) and direct lowering (lower_onnx_to_ir)

- Direct FlatBuffer export (

*_float32.tflite, *_float16.tflite, and optional quantized variants)

- Optional direct reports/evaluation (

*_op_coverage_report.json, tensor correspondence, ONNX/TFLite check)

In this fast path, the per-node TensorFlow conversion (op.make_node() over all ONNX nodes) is skipped.

This removes the long debug traces such as:

INFO: <index> / <total>INFO: onnx_op_type: ...INFO: tf_op_type: ...

Measured example (same model, float32 TFLite write stage):

tf_converter: ~24.947sflatbuffer_direct: ~0.239sflatbuffer_direct was approximately 107x faster than tf_converter in this case.

Actual speedup depends on model structure, enabled options, and runtime environment.

Direct export can also generate TF-side artifacts without falling back to tf_converter:

--output_h5--output_keras_v3--output_tfv1_pb--flatbuffer_direct_output_pytorch

These artifacts are generated from an internal SavedModel bridge built from float32 ModelIR.

If direct export fails, conversion stops with an explicit error.

-inimc / -onimc also stay on the direct path in flatbuffer_direct.

For ONNX input and -it input, these options crop the imported/lowered ModelIR

at the specified boundary tensor names instead of splitting the ONNX graph.

-dgc, -ebu, and -eru also stay on the direct path in flatbuffer_direct.

For ONNX input they are applied during lowering or as post-lowering ModelIR rewrites.

For -it input they are applied to imported ModelIR before SavedModel bridge,

split planning, or rewritten TFLite export.

If the requested rewrite cannot be applied safely, conversion stops with an explicit error.

-me also stays on the direct path in flatbuffer_direct.

For ONNX MeanVarianceNormalization, direct lowering uses primitive builtin ops

and applies mvn_epsilon to the internal variance + epsilon term without

falling back to tf_converter.

--disable_model_save also stays on the direct path. In flatbuffer_direct, it means the conversion can still run internal validation and temporary staging, but no final artifacts are left in the requested output directory.

Invalid combinations are rejected explicitly:

--disable_model_save with --output_h5, --output_keras_v3, or --output_tfv1_pb--enable_auto_split_model with --output_h5, --output_keras_v3, or --output_tfv1_pb

SavedModel direct export from flatbuffer_direct ModelIR is available with

--flatbuffer_direct_output_saved_model.

PyTorch package direct export is available with

--flatbuffer_direct_output_pytorch.

These options have the following constraints:

--tflite_backend flatbuffer_direct is required for both--flatbuffer_direct_output_saved_model cannot be combined with --disable_model_saveCUSTOM ops are rejected with an explicit error

| INT8 ONNX |

INT8 TFLite(LiteRT) |

|

|

- e.g. LiteRT only output

onnx2tf \

-i iat_llie_180x320.onnx \

-tb flatbuffer_direct

- e.g. Generate additional

saved_model from LiteRT after LiteRT output

onnx2tf \

-i iat_llie_180x320.onnx \

-tb flatbuffer_direct \

-fdosm

- e.g. Generate

saved_model directly from an existing LiteRT (.tflite) file

onnx2tf \

-it iat_llie_180x320_float32.tflite \

-tb flatbuffer_direct

- e.g. Generate

.h5 directly from an existing LiteRT (.tflite) file without tf_converter fallback

onnx2tf \

-it iat_llie_180x320_float32.tflite \

-tb flatbuffer_direct \

-oh5

- e.g. Generate a PyTorch package directly from an existing LiteRT (

.tflite) file

onnx2tf \

-it iat_llie_180x320_float32.tflite \

-o tmp_iat_llie_180x320_from_tflite \

-tb flatbuffer_direct \

-fdopt

- e.g. Compare the input LiteRT model and the generated PyTorch package with the same seeded inputs

onnx2tf \

-it iat_llie_180x320_float32.tflite \

-o tmp_iat_llie_180x320_from_tflite \

-tb flatbuffer_direct \

-fdopt \

-cotof

This outputs:

iat_llie_180x320_float32_pytorch/iat_llie_180x320_float32_pytorch_accuracy_report.json (TFLite↔PyTorch)iat_llie_180x320_float32_accuracy_comparison_report.json

[Ultra experimental] PyTorch export example (yolox_s.onnx)

flatbuffer_direct can emit a native PyTorch package together with optional

TorchScript, Dynamo ONNX, and ExportedProgram artifacts in one run.

Generate all PyTorch-side artifacts plus TFLite and accuracy reports:

onnx2tf \

-i yolox_s.onnx \

-o tmp_yolox_s \

-tb flatbuffer_direct \

-cotof \

-fdopt \

-fdots \

-fdodo \

-fdoep

The output directory contains:

yolox_s_float32.tfliteyolox_s_float16.tfliteyolox_s_accuracy_report.json (ONNX↔TFLite)yolox_s_pytorch_accuracy_report.json (ONNX↔PyTorch)yolox_s_accuracy_comparison_report.jsonyolox_s_pytorch/

model.pyruntime.pystate_dict.pthmetadata.jsonyolox_s_pytorch/yolox_s_jit.ptyolox_s_pytorch/yolox_s_dynamo.onnxyolox_s_pytorch/yolox_s_ep.pt2

The generated PyTorch package is a normal torch.nn.Module package. You can

load it and run eager inference directly:

import sys

import torch

sys.path.append("tmp_yolox_s")

from yolox_s_pytorch import load_model

model = load_model(device="cpu", eval_mode=True)

x = torch.zeros((1, 3, 640, 640), dtype=torch.float32)

with torch.no_grad():

output = model(x)

print("input :", tuple(x.shape))

print("output:", tuple(output.shape))

print(model.forward_named(x).keys())

Expected output for the current yolox_s package:

input : (1, 3, 640, 640)

output: (1, 8400, 85)

dict_keys(['output'])

You can also load the bundled state_dict.pth explicitly with standard

PyTorch APIs:

import sys

from pathlib import Path

import torch

sys.path.append("tmp_yolox_s")

from yolox_s_pytorch.model import Model

package_dir = Path("tmp_yolox_s/yolox_s_pytorch")

model = Model(load_weights=False, eval_mode=True)

state_dict = torch.load(package_dir / "state_dict.pth", map_location="cpu")

model.load_state_dict(state_dict, strict=True)

The generated state_dict.pth is saved in load_state_dict-compatible format

for native PyTorch packages.

For native packages, raw torch.onnx.export(..., dynamo=True) and raw

torch.export.save(torch.export.export(...)) are intended to produce the same

graph structure as the helper-generated *_dynamo.onnx and *_ep.pt2.

Example: raw torch.onnx.export

from pathlib import Path

import importlib

import logging

import sys

import torch

package_dir = Path("tmp_yolox_s/yolox_s_pytorch").resolve()

sys.path.insert(0, str(package_dir.parent))

pkg = importlib.import_module(package_dir.name)

model = pkg.load_model(device="cpu", eval_mode=True)

model.eval()

example_inputs = (torch.randn(1, 3, 640, 640),)

logging.getLogger("torch.onnx._internal.exporter._registration").setLevel(logging.ERROR)

with torch.no_grad():

torch.onnx.export(

model,

example_inputs,

str(package_dir / "raw_dynamo.onnx"),

dynamo=True,

input_names=model.input_names,

output_names=model.output_names,

)

Example: raw torch.export.save

from pathlib import Path

import importlib

import sys

import torch

package_dir = Path("tmp_yolox_s/yolox_s_pytorch").resolve()

sys.path.insert(0, str(package_dir.parent))

pkg = importlib.import_module(package_dir.name)

model = pkg.load_model(device="cpu", eval_mode=True)

model.eval()

example_inputs = (torch.randn(1, 3, 640, 640),)

with torch.no_grad():

exported_program = torch.export.export(model, example_inputs)

torch.export.save(

exported_program,

str(package_dir / "raw_exported_program.pt2"),

)

Both examples require a concrete example input shape. For dynamic public inputs,

use the same concrete shape/data that you would provide to -fdodo or -fdoep

via --shape_hints, --test_data_nhwc_path, or -cind.

[!CAUTION]

Native PyTorch packages generated by --flatbuffer_direct_output_pytorch are intended primarily for inference, not for training as-is.

Detailed reasons:

- The exporter is designed to preserve inference behavior of the converted TFLite/ModelIR graph, not to reconstruct the original training-time PyTorch model semantics.

- The generated graph may include inference-oriented rewrites such as layout normalization, constant folding, reshapes/transposes inserted for runtime compatibility, and decomposition into primitive ops. These are correct for forward inference but are not guaranteed to be ideal or even stable for gradient-based optimization.

- Some generated models include postprocessing or task-specific inference logic such as

argmax, non_max_suppression, score filtering, indexing-heavy tensor selection, or shape-control branches. These are typically non-differentiable or poor training targets.

- Native export may emit helper paths that are semantically equivalent for inference but were not designed with training ergonomics in mind, such as strict shape/layout alignment, bridge shims for 1D/2D/3D convolution compatibility, or graph-local runtime helpers.

- Fallback-backed packages (

tflite, saved_model, string_normalizer) are wrappers around non-PyTorch execution backends and therefore should be treated as inference-only.

- Even native packages are emitted with

eval_mode=True as the normal usage path. The exporter does not currently guarantee training-safe reconstruction of modules such as normalization layers, recurrent state handling, or control-flow-heavy blocks in the same form expected by optimizers and schedulers.

state_dict.pth is load_state_dict-compatible for native packages, but compatibility of weight loading does not imply that the generated module is a faithful training architecture suitable for fine-tuning.

Practical guidance:

- Use generated PyTorch packages for inference validation, packaging, and side-by-side output comparison.

- If you want to train or fine-tune a model, treat the generated package as a reference implementation only, and rebuild or simplify the architecture into a training-oriented PyTorch model before optimization.

Click to expand

- Scope: ONNX ops listed in the `Supported layers` table above.

- Source of truth: `onnx2tf/tflite_builder/op_registry.py` and `--report_op_coverage` output.

- Current summary:

- Listed ONNX ops in the builtin table below: `192`

- Policy counts are generated in `*_op_coverage_report.json` (`schema_policy_counts`).

- Check each conversion run with `--report_op_coverage` for the latest numbers.

Notes:

- `flatbuffer_direct` supports only a subset of ONNX ops as TFLite builtins.

- Some ops are conditionally supported (rank/attribute/constant-input constraints).

- For model-specific results, use `--report_op_coverage` and check `*_op_coverage_report.json`.

Builtin supported (ONNX -> TFLite) in flatbuffer_direct

|ONNX OP|TFLite OP|Key constraints (flatbuffer_direct)|

|:-|:-|:-|

|Abs|ABS (or NEG + MAXIMUM for INT64)|For `INT64` input, lowered as `NEG + MAXIMUM` because TFLite `ABS` kernel does not support `INT64`|

|Acos|MUL + SUB + SQRT + ATAN2|Input/output dtype must be `FLOAT16` or `FLOAT32`|

|Acosh|SUB + ADD + SQRT + MUL + LOG|Input/output dtype must be `FLOAT16` or `FLOAT32`|

|Add|ADD|-|

|AffineGrid|BATCH_MATMUL + TRANSPOSE + RESHAPE|`size` input must be constant rank-1 length `4` or `5` with static positive values; `theta` must be rank-3 float tensor with shape `[N,2,3]` or `[N,3,4]`; output shape must match `size`; `align_corners` in `{0,1}`|

|And|LOGICAL_AND|-|

|ArgMax|ARG_MAX (+ optional RESHAPE for keepdims)|`axis` must be in range, `keepdims` must be `0` or `1`, `select_last_index=0`, output dtype must be `INT32` or `INT64`|

|ArgMin|ARG_MIN (+ optional RESHAPE for keepdims)|`axis` must be in range, `keepdims` must be `0` or `1`, `select_last_index=0`, output dtype must be `INT32` or `INT64`|

|Attention|RESHAPE + TRANSPOSE + BATCH_MATMUL + MUL + SOFTMAX + CAST|Canonical 3-input form only (`query/key/value`); single output only; `q_num_heads == kv_num_heads > 0`; `is_causal=0`; `qk_matmul_output_mode=0`; `softcap=0`; rank-3 float tensors only|

|Asin|MUL + SUB + SQRT + ATAN2|Input/output dtype must be `FLOAT16` or `FLOAT32`|

|Asinh|MUL + ADD + SQRT + LOG|Input/output dtype must be `FLOAT16` or `FLOAT32`|

|Atan|ATAN2|Input/output dtype must be `FLOAT16` or `FLOAT32`|

|Atanh|ADD + SUB + DIV + LOG + MUL|Input/output dtype must be `FLOAT16` or `FLOAT32`|

|AveragePool|AVERAGE_POOL_2D (+ optional PAD/PADV2 + divisor correction DIV)|2D only (rank=4), `ceil_mode` in `{0,1}`, `count_include_pad` in `{0,1}`. Supports `auto_pad` in `{NOTSET,VALID,SAME_*,SAME_LOWER}` and explicit pads. For `count_include_pad=0` with non-zero effective pads, a correction path (`AVERAGE_POOL_2D` on mask + `DIV`) is applied|

|BatchNormalization|MUL + ADD|All parameter inputs (`scale`, `bias`, `mean`, `var`) must be constant|

|Bernoulli|SHAPE + RANDOM_UNIFORM + LESS (+ optional CAST)|Input dtype must be `FLOAT16/FLOAT32`; output dtype must be `BOOL` or numeric|

|BitShift|RIGHT_SHIFT (RIGHT) or MUL-based (LEFT)|LHS/RHS must be integer tensors, `direction` must be `LEFT` or `RIGHT`; `LEFT` requires constant shift input|

|BitwiseAnd|LOGICAL_AND|BOOL tensors only|

|BitwiseNot|LOGICAL_NOT / SUB + CAST|Input dtype must be BOOL or integer|

|BitwiseOr|LOGICAL_OR|BOOL tensors only|

|BitwiseXor|BITWISE_XOR|Input dtypes must match and be BOOL/integer|

|BlackmanWindow|CAST + SQUEEZE + RANGE + MUL + DIV + COS + SUB + ADD + MAXIMUM|Input must be scalar-like rank-1 length-1 integer tensor; output dtype must be `FLOAT16/FLOAT32`|

|Cast|CAST|-|

|CastLike|CAST|-|

|Ceil|CEIL|-|

|Celu|MAXIMUM + MINIMUM + DIV + EXP + SUB + MUL + ADD|Input/output dtype must be `FLOAT16` or `FLOAT32`|

|CenterCropPad|SLICE + PAD (+ optional RESHAPE passthrough)|Target shape input must be constant rank-1; `axes` must be in range and length must match target shape; output rank must match input rank; string dtype unsupported in builtin path|

|Clip|RELU / RELU6 / MAXIMUM + MINIMUM|General constant clip ranges are supported via `MAXIMUM`/`MINIMUM` decomposition. ReLU fast-path: `min=0,max=+inf`; ReLU6 fast-path: `min=0,max=6`|

|Col2Im|RESHAPE + TRANSPOSE + TRANSPOSE_CONV + SLICE + CAST|Input/output dtype must be `FLOAT16/FLOAT32`; input/output ranks must be `3/4`; `image_shape` and `block_shape` must be constant 2-elements; static positive dimensions required|

|Concat|CONCATENATION|-|

|ConstantOfShape|CAST + FILL|Shape input must be rank-1 integer tensor; `value` attribute must be scalar (or omitted for zero-fill)|

|Conv|CONV_2D / DEPTHWISE_CONV_2D / CONV_3D|2D: rank=4, constant weights, grouped conv only regular/depthwise, zero pads or `auto_pad=SAME_*`. 3D: rank=5, constant rank-5 weights, `group=1`, strides/dilations length=3, `auto_pad` in `{NOTSET,VALID,SAME_UPPER}` (`SAME_LOWER` unsupported); explicit pads are handled via VALID+pad/crop path|

|ConvInteger|CAST + SUB + PAD + CONV_2D / DEPTHWISE_CONV_2D + TRANSPOSE|Input must be integer tensor, output dtype must be `INT32/INT64`, weights must be constant rank-4, and grouped conv must be regular/depthwise only|

|ConvTranspose|TRANSPOSE_CONV / CONV_3D_TRANSPOSE (+ optional ADD bias; 1D uses `EXPAND_DIMS/SQUEEZE` shim)|1D/2D/3D supported (1D: input rank=3 + weight rank=3 const, 2D: input rank=4 + weight rank=4 const, 3D: input rank=5 + weight rank=5 const), `group=1`, dilations must be all-ones, and `output_padding` must satisfy `0 <= output_padding < stride` (1D len=1, 2D len=2, 3D len=3). `auto_pad` supports `SAME_*`, `VALID`, and `NOTSET`; explicit non-zero pads are handled by post-crop when output shape is static|

|Compress|NOT_EQUAL + WHERE + RESHAPE + CAST + GATHER|Condition input must be rank-1 `BOOL` or integer tensor; `axis` may be omitted (flattened output) or be in range; when `axis` is specified and static, condition length must match that axis dimension|

|Cos|COS|-|

|Cosh|SUB + EXP + ADD + MUL|Input/output dtype must be `FLOAT16` or `FLOAT32`|

|CumProd|RANGE + LESS/LESS_EQUAL + RESHAPE + TILE + FILL + SELECT_V2 + REDUCE_PROD (+ optional REVERSE_V2)|Input/output dtype must be `FLOAT16` or `FLOAT32`; input shape must be fully static positive; `axis` must be scalar constant (or attr) and in range; `exclusive`/`reverse` must be `0` or `1`|

|DFT|RESHAPE + BATCH_MATMUL + CONCATENATION (+ optional CAST)|Current builtin path supports real input `[..., N, 1]` only with `onesided=1`, `inverse=0`, and transform `axis=rank-2`; input/output shapes must be static positive; optional `dft_length` and `axis` inputs must be constant scalars; output shape must be `[..., N//2 + 1, 2]`|

|CumSum|CUMSUM|Input rank must be `>=1`; `axis` must be scalar constant (or attr) and in range; `exclusive`/`reverse` must be `0` or `1`|

|DeformConv|PAD + RESHAPE + TRANSPOSE + SHAPE + RANGE + SQUEEZE + GATHER + FLOOR + MAXIMUM/MINIMUM + CAST + MUL + ADD + SUB + BATCH_MATMUL|Constrained 2D float path only: input/output/offset/mask rank=4, input/offset/output(/mask) dtype `FLOAT16/FLOAT32`, weights and optional bias must be constant, kernel/channel/spatial dims must be static positive, and builtin lowering is limited to `group=1` and `offset_group=1` for LiteRT runtime safety. Grouped patterns remain custom-op candidates|

|DequantizeLinear|DEQUANTIZE|`scale` must be constant, `zero_point` (if provided) must be constant, per-axis `axis` must be in range|

|DepthToSpace|DEPTH_TO_SPACE (DCR) / RESHAPE + TRANSPOSE + RESHAPE (CRD)|Rank-4 only, `blocksize > 1`, `mode` in `{DCR,CRD}`|

|Det|GATHER + RESHAPE + MUL + SUB + ADD|Input/output dtype must be `FLOAT16/FLOAT32`; builtin lowering currently supports static square `2x2` / `3x3` matrices only|

|Div|DIV or MUL (when divisor is constant reciprocal)|For non-floating outputs, lowered as `CAST -> MUL(reciprocal) -> CAST` to preserve output dtype without using unsupported integer DIV paths|

|Dropout|RESHAPE (+ optional SHAPE + FILL for mask output)|Inference-time no-op in flatbuffer_direct; inputs `ratio`/`training_mode` are ignored|

|DynamicQuantizeLinear|NEG + REDUCE_MAX + MINIMUM + MAXIMUM + SUB + DIV + ADD + CAST|Input dtype must be `FLOAT16/FLOAT32`, output dtypes must be `Y=UINT8`, `Y_Scale=FLOAT16/FLOAT32`, `Y_ZeroPoint=UINT8`; scale/zero-point outputs must be scalar|

|Einsum|FULLY_CONNECTED|Rank-2 matmul-style equation only (`ij,jk->ik`), rhs input must be constant weights|

|Elu|ELU|-|

|Equal|EQUAL|-|

|Erf|ABS + SIGN + MUL + ADD + DIV + EXP + SUB|Input/output dtype must be `FLOAT16` or `FLOAT32`|

|Exp|EXP|-|

|Expand|RESHAPE + MUL (broadcast via const ones)|Output shape must be statically known, non-negative, and broadcast-compatible with input shape (current direct lowering uses static `RESHAPE + MUL`)|

|EyeLike|RESHAPE (from const eye)|Output must be rank-2 with fully static positive shape|

|Flatten|RESHAPE|Input rank must be >= 1|

|Floor|FLOOR|-|

|FusedConv|CONV_2D / DEPTHWISE_CONV_2D + fused activation|Supports `Relu/Tanh/Sigmoid/LeakyRelu/Clip/HardSigmoid` activations with valid scalar params; convolution constraints follow builtin Conv/FusedConv validator|

|FusedMatMul|BATCH_MATMUL (+ optional MUL for `alpha`)|Input rank >= 2, dtypes FLOAT16/FLOAT32 only, `transA/transB` must be 0 or 1, finite `alpha` required|

|Gather|GATHER|`batch_dims=0` only|

|GatherElements|CAST + RESHAPE + CONCATENATION + GATHER_ND|Data/indices ranks must match, output shape must equal indices shape, static positive output dims required, `axis` must be in range|

|GatherND|CAST + GATHER_ND|`batch_dims=0` only; indices must be integer type; indices last dim must be static positive and `<= params_rank`|

|Gelu|GELU|-|

|Gemm|FULLY_CONNECTED|Input rank=2, weight rank=2 + constant, `transA=0` only|

|Greater|GREATER|-|

|GreaterOrEqual|GREATER_EQUAL|-|

|GridSample|PAD + RESHAPE + TRANSPOSE + SQUEEZE + SLICE + ADD/SUB/MUL + FLOOR + MAXIMUM/MINIMUM + CAST + GATHER|Rank-4/5 with static positive dims; `mode=bilinear`; `padding_mode` in `{zeros,border}`; `align_corners` in `{0,1}`. When `grid` is graph input, keep shape metadata (e.g. `-kat grid`)|

|GlobalAveragePool|MEAN|Input rank must be `>=3`|

|GlobalLpPool|ABS + POW + SUM + RESHAPE (+ optional CAST)|Input rank must be `>=3`; input/output dtype must be `FLOAT16/FLOAT32`; `p` must be finite and `> 0`|

|GlobalMaxPool|REDUCE_MAX|Input rank must be `>=3`|

|GroupNormalization|RESHAPE + MEAN + SUB + MUL + ADD + SQRT + DIV (+ optional CAST)|Input/output dtype must be `FLOAT16/FLOAT32`; scale/bias must be constant length=`C`; `num_groups > 0` and must divide channel dim; channel/spatial dims must be static positive|

|GRU|TRANSPOSE + SLICE + SQUEEZE + BATCH_MATMUL + ADD + MUL + SUB + LOGISTIC + TANH + RESHAPE + CONCATENATION + EXPAND_DIMS|`layout=0`; `direction` in `{forward, reverse, bidirectional}`; `sequence_lens` unsupported; `W/R` must be constant rank-3; `linear_before_reset` in `{0,1}`; activations `[Sigmoid,Tanh]`; `clip=0`|

|Hardmax|TRANSPOSE + ARG_MAX + ONE_HOT|`axis` must be in range; target axis size must be static positive|

|HardSigmoid|MUL + ADD + MAXIMUM + MINIMUM|Input/output dtype must be FLOAT16 or FLOAT32|

|HardSwish|HARD_SWISH|Input/output dtype must be `FLOAT16` or `FLOAT32`|

|HammingWindow|CAST + SQUEEZE + RANGE + MUL + DIV + COS + SUB + MAXIMUM|Input must be scalar-like rank-1 length-1 integer tensor; output dtype must be `FLOAT16/FLOAT32`|

|HannWindow|CAST + SQUEEZE + RANGE + MUL + DIV + COS + SUB + MAXIMUM|Input must be scalar-like rank-1 length-1 integer tensor; output dtype must be `FLOAT16/FLOAT32`|

|MelWeightMatrix|const-folded builtin tensor materialization|All five inputs must be constant scalars; output dtype must be `FLOAT16/FLOAT32`; output shape is `[dft_length // 2 + 1, num_mel_bins]`; requires `0 <= lower_edge_hertz < upper_edge_hertz <= sample_rate / 2`|

|Identity|RESHAPE|-|

|If|CONCATENATION + REDUCE_MAX + CAST + ADD + MUL + RESHAPE + NON_MAX_SUPPRESSION_V4/V5 + SLICE + GATHER + SHAPE + SUB + SELECT/SELECT_V2|Built-in lowering supports constrained patterns: NMS-guard pattern (empty then-branch + NMS else-branch), axis0 Add-branch pattern (single `Add` per branch, same trailing dims, different static first dim), SequenceConstruct Add-branch pattern (branch-local `Constant`/`Add` with terminal `SequenceConstruct`), and nested ReduceMin/Add pattern (else-branch `ReduceMin/Greater` with nested Add/Add `If`). In control-flow branch lowering, dynamic-condition `If` is additionally supported when both branches are single-output initializer-only constants (lowered via `Where`)|

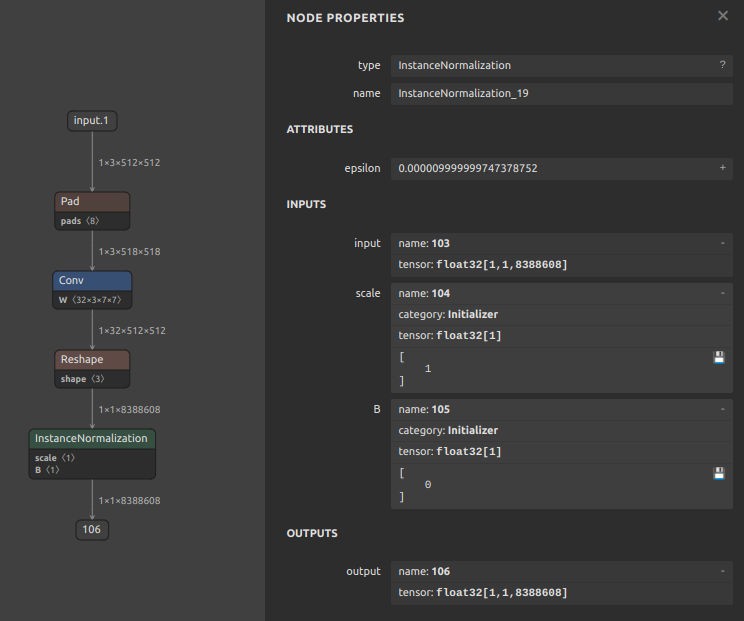

|InstanceNormalization|MEAN + SUB + MUL + MEAN + ADD + SQRT + DIV + MUL + ADD|Input/output dtype must be `FLOAT16` or `FLOAT32`; input rank must be `>=3`; `scale` and `bias` inputs must be constant|

|IsInf|ABS + EQUAL / LESS + GREATER + LOGICAL_AND|Input dtype must be `FLOAT16/FLOAT32`; output dtype must be `BOOL`; `detect_negative` / `detect_positive` are honored|

|IsNaN|NOT_EQUAL|Input dtype must be `FLOAT16/FLOAT32`; output dtype must be `BOOL`|

|MeanVarianceNormalization|MEAN + SUB + MUL + MEAN + ADD + SQRT + DIV|Input/output dtype must be `FLOAT16` or `FLOAT32`; `mvn_epsilon` is applied directly in builtin lowering; default axes follow ONNX channel-first semantics and rank `<3` reduces over axis `0`|

|Inverse|SLICE + MUL + SUB + ADD + NEG + CONCATENATION + DIV (+ optional CAST in/out)|Input/output dtype must be `FLOAT16` or `FLOAT32`; input rank must be `>=2`; matrix last dimensions must resolve to square `2x2` or `3x3`|

|LeakyRelu|LEAKY_RELU|Input/output dtype must be `FLOAT16` or `FLOAT32`|

|Less|LESS|-|

|LessOrEqual|LESS_EQUAL|-|

|Log|LOG|Input/output dtype must be `FLOAT16` or `FLOAT32`|

|LogSoftmax|SOFTMAX + LOG (+ transpose in/out for non-last axis)|`axis` must be in range (negative axis normalized)|

|Loop|WHILE (+ subgraph-local ADD/LESS/LOGICAL_AND/RESHAPE and lowered body ops)|Built-in lowering supports either static-unroll patterns (constant `trip_count`/`cond`, loop-carried outputs only) or WHILE patterns with loop-carried outputs only (no scan outputs). `max_trip_count` input dtype must be `INT32` or `INT64`|

|LpPool|ABS + POW + AVERAGE_POOL_2D + MUL + RESHAPE (+ optional CAST)|Rank-4 only; input/output dtype must be `FLOAT16/FLOAT32`; `kernel_shape/strides/dilations` must be 2D; `dilations=[1,1]`; `p` must be finite and `> 0`; non-zero pads require `count_include_pad=1`|

|LpNormalization|L2_NORMALIZATION|`p=2`, `axis=last` only|

|LRN|LOCAL_RESPONSE_NORMALIZATION (+ transpose in/out)|Input rank must be 4, `size` must be a positive odd integer|

|LayerNormalization|MEAN + SUB + MUL + ADD + SQRT + DIV (+ optional CAST)|`axis` and `stash_type` are honored; optional ONNX outputs (`mean`, `inv_std_dev`) are supported|

|LSTM|UNIDIRECTIONAL_SEQUENCE_LSTM / BIDIRECTIONAL_SEQUENCE_LSTM + REVERSE_V2 + SPLIT + SQUEEZE + SLICE + RESHAPE/EXPAND_DIMS + CONCATENATION|`direction` in `{forward,reverse,bidirectional}`, `layout=0`, `input_forget=0`; `W/R` must be constant rank-3 with `num_directions` matching `direction`; optional `B` must be constant shape `[num_directions, 8*hidden_size]`; `initial_h/initial_c` are optional (when provided, shape must be `[num_directions, batch, hidden]`; runtime tensor inputs are supported); `sequence_lens` and peephole input `P` unsupported; projection (`R.shape[2] != hidden_size`) unsupported|

|MatMul|BATCH_MATMUL (+ CAST/RESHAPE/SQUEEZE helpers)|Supports standard rank>=2 matmul, vector lhs/rhs forms, vector dot, and scalar multiply patterns|

|MatMulInteger|CAST + SUB + BATCH_MATMUL|A/B input rank must be >=2 (rank=1 placeholder allowed), A/B dtypes must be integer tensor types (`INT8/UINT8/INT16/UINT16/INT32`), output dtype must be `INT32/INT64`; optional zero-point inputs must be scalar/1D and shape-compatible|

|Max|MAXIMUM (chained for >2 inputs)|At least 2 inputs|

|MaxPool|MAX_POOL_2D|2D only (rank=4), `ceil_mode` in `{0,1}`, zero pads or `auto_pad=SAME_*`|

|MaxRoiPool|TRANSPOSE + SLICE + MAX_POOL_2D + CONCATENATION|Current builtin path supports rank-4 input/output only with constant `rois`; input/output dtype must be `FLOAT16/FLOAT32`; all shapes must be static positive; `pooled_shape` must be length-2 positive and match output spatial dims; output channels must match input channels; output batch must equal the number of constant rois|

|MaxUnpool|CAST + RESHAPE + SCATTER_ND|Input/indices/output must be rank-4; input and indices shapes must match; input/output dtype must match and indices must be integer; output shape must be static positive with matching batch/channel; `kernel_shape` and `strides` must be length-2 positive; zero `pads` only; optional `output_shape` input must be a constant length-4 tensor matching the graph output shape|

|Mean|ADD + DIV (+ optional CAST)|All inputs and output must be `FLOAT16/FLOAT32`|

|NegativeLogLikelihoodLoss|TRANSPOSE + CAST + EQUAL + SELECT_V2 + ONE_HOT + MUL + SUM + SUB (+ optional GATHER/MEAN/DIV)|Input/output dtype must be `FLOAT16/FLOAT32`; target dtype must be integer; input rank must be `>=2` with static positive class dim at axis 1; optional weight must be rank-1 float tensor of length `C`; `reduction` in `{none,sum,mean}`; `ignore_index` supported|

|Min|MINIMUM (chained for >2 inputs)|At least 2 inputs|

|Mish|EXP + ADD + LOG + TANH + MUL|Input/output dtype must be `FLOAT16` or `FLOAT32`|

|Mod|FLOOR_MOD|`fmod=0` only|

|Mul|MUL|-|

|MultiHeadAttention|RESHAPE + TRANSPOSE + BATCH_MATMUL + MUL + SOFTMAX + CAST|`num_heads > 0`, `unidirectional=0`, query/key/value must be rank-3 same dtype (`FLOAT16/FLOAT32`), hidden dims must be static positive and divisible by `num_heads`|

|Neg|NEG|-|

|NonMaxSuppression|NON_MAX_SUPPRESSION_V4/V5 + SLICE + GATHER + SUB + CAST + RESHAPE + CONCATENATION (+ optional ARG_MAX + REDUCE_MAX)|Rank-3 boxes/scores only; `center_point_box=0`; currently `batch=1`; boxes last dim must be `4`; static positive `num_boxes`; `scores_shape[2] == boxes_shape[1]`; optional thresholds/max_output must be scalar constants; output dtype must be `INT32` or `INT64`; when `--output_nms_with_argmax` is disabled, class dim must be static positive (class dim `>1` is supported via class-wise NMS). `--switch_nms_version` (`-snms`) selects V4 or V5.|

|NonZero|NOT_EQUAL + WHERE + TRANSPOSE + CAST|Input rank must be `>=1`; output rank must be `2`|

|Not|LOGICAL_NOT|-|

|OneHot|CAST + ADD + FLOOR_MOD + ONE_HOT|`depth` input must be constant scalar and `>0`; `values` input must be constant 2-element tensor `[off_value,on_value]`; normalized `axis` must be in range|

|OptionalHasElement|const-fold (`BOOL` scalar)|Built-in lowering supports determinable-presence cases only: non-optional tensor inputs are folded to `true`; inputs produced by `Optional` (empty/value) are folded to `false/true`; runtime-optional graph inputs are not supported in builtin path|

|Or|LOGICAL_OR|-|

|Pad|PAD / PADV2 / MIRROR_PAD (+ dynamic pads bridge: CAST + RESHAPE + TRANSPOSE)|`mode` in `{constant,reflect}`; `reflect` is lowered to `MIRROR_PAD(REFLECT)`. `pads` may be constant or dynamic rank-1 tensor of length `2*rank` (integer type, internally cast to `INT32`). For `mode=constant`, constant zero is lowered as `PAD`; constant non-zero is lowered as `PADV2` (non-quantized tensors only)|

|Pow|POW|Output dtype must be `FLOAT16` or `FLOAT32`|

|PRelu|PRELU|`slope` must be constant (scalar or per-channel)|

|QGemm|FULLY_CONNECTED|Input rank=1 or 2, weight must be constant rank=2, bias must be constant, quantization params must be constant, `transA=0`, `transB` in `{0,1}`|

|QLinearAdd|ADD|All quantization params (`a/b/c scale`, `a/b/c zero_point`) must be constant|

|QLinearAveragePool|DEQUANTIZE + TRANSPOSE + AVERAGE_POOL_2D + TRANSPOSE + QUANTIZE|Input rank=4 only, all quantization params (`x scale/zero_point`, `y scale/zero_point`) must be constant, `kernel_shape/strides` must be 2D, `dilations=[1,1]`, `ceil_mode` in `{0,1}` (`ceil_mode=1` has stricter pad/auto_pad constraints), and `count_include_pad=0`|

|QLinearConcat|DEQUANTIZE + CONCATENATION + QUANTIZE|`y scale/zero_point` and each input triplet (`x scale/zero_point`) must be constant, input ranks must match, `axis` must be in range|

|QLinearConv|CONV_2D / DEPTHWISE_CONV_2D|Input/output rank=4, weight must be constant rank=4, all quantization params constant, group conv only regular/depthwise (depthwise detection uses `group` and weight shape), optional bias must be constant|

|QLinearGlobalAveragePool|AVERAGE_POOL_2D (preferred) / DEQUANTIZE + MEAN + QUANTIZE (fallback)|All quantization params (`x scale/zero_point`, `y scale/zero_point`) must be constant, input rank >= 3, `channels_last` must be 0 or 1. Quantized `AVERAGE_POOL_2D` path is used for rank-4 with static spatial dims and per-tensor quantization|

|QLinearLeakyRelu|DEQUANTIZE + PRELU + QUANTIZE|All quantization params (`x/y scale`, `x/y zero_point`) must be constant|

|QLinearMatMul|FULLY_CONNECTED|Input rank=1 or 2, weight must be constant rank=2, all quantization params constant|

|QLinearMul|MUL|All quantization params (`a/b/c scale`, `a/b/c zero_point`) must be constant|

|QLinearSigmoid|DEQUANTIZE + LOGISTIC + QUANTIZE|All quantization params (`x scale/zero_point`, `y scale/zero_point`) must be constant|

|QLinearSoftmax|DEQUANTIZE + SOFTMAX + QUANTIZE|All quantization params (`x/y scale`, `x/y zero_point`) must be constant; `axis` must be last dimension|

|QuantizeLinear|QUANTIZE|`scale` must be constant, `zero_point` (if provided) must be constant, per-axis `axis` must be in range|

|RandomNormal|RANDOM_STANDARD_NORMAL (+ optional MUL + ADD + CAST)|`shape` attribute must be present and non-empty; output dtype must be `FLOAT16/FLOAT32`; `seed` is mapped to TFLite random options when provided|

|RandomNormalLike|SHAPE + RANDOM_STANDARD_NORMAL (+ optional MUL + ADD + CAST)|Rank inferred from input shape; output dtype must be supported numeric type (`FLOAT16/FLOAT32/INT*`/`UINT*`). `seed` is mapped to TFLite random options when provided|

|RandomUniform|RANDOM_UNIFORM (+ optional MUL + ADD + CAST)|`shape` attribute must be present and non-empty; output dtype must be `FLOAT16/FLOAT32`; `seed` is mapped to TFLite random options when provided|

|RandomUniformLike|SHAPE + RANDOM_UNIFORM (+ optional MUL + ADD + CAST)|Input rank is used only to materialize the runtime shape; output dtype must be `FLOAT16/FLOAT32`; `seed` is mapped to TFLite random options when provided|

|Range|CAST + SQUEEZE + RANGE|Each of `start/limit/delta` must be scalar-like rank-1 length-1 tensor|

|Reciprocal|DIV|Input/output dtype must be `FLOAT16` or `FLOAT32`|

|ReduceL1|ABS + SUM|Reduce axes must be constant when provided via input tensor|

|ReduceL2|MUL + SUM + SQRT + CAST|Reduce axes must be constant when provided via input tensor|

|ReduceLogSum|SUM + LOG (+ optional CAST)|Input/output dtype must be `FLOAT16/FLOAT32`; reduce axes must be constant when provided via input tensor|

|ReduceLogSumExp|EXP + SUM + LOG (+ optional CAST)|Input/output dtype must be `FLOAT16/FLOAT32`; reduce axes must be constant when provided via input tensor|

|ReduceMax|REDUCE_MAX|Reduce axes must be constant when provided via input tensor|

|ReduceMean|MEAN|Reduce axes must be constant when provided via input tensor|

|ReduceMin|REDUCE_MIN|Reduce axes must be constant when provided via input tensor|

|ReduceProd|REDUCE_PROD|Reduce axes must be constant when provided via input tensor|

|ReduceSumSquare|MUL + SUM (+ optional CAST)|Input/output dtype must be `FLOAT16/FLOAT32`; reduce axes must be constant when provided via input tensor|

|ReduceSum|SUM|Reduce axes must be constant when provided via input tensor|

|Relu|RELU|-|

|Reshape|RESHAPE|Shape input must be constant|

|Resize|RESIZE_NEAREST_NEIGHBOR / RESIZE_BILINEAR / (cubic) RESHAPE + BATCH_MATMUL + RESHAPE + BATCH_MATMUL|Rank-4 only. `nearest`/`linear`: builtin resize path (limited attr combinations), parameters must be constant `scales/sizes` or dynamic rank-1 integer `sizes` (INT32/INT64). `cubic`: strict ONNX cubic decomposition (no FlexResizeBicubic), supports `coordinate_transformation_mode` in `{align_corners, asymmetric, half_pixel, pytorch_half_pixel}` and honors `cubic_coeff_a`/`exclude_outside`; requires static input C/H/W and static output H/W. Batch dimension is preserved through the cubic decomposition path|

|ReverseSequence|CAST + REVERSE_SEQUENCE|Input rank must be `>=2`; `seq_lengths` must be rank-1 integer tensor; `batch_axis`/`time_axis` must be in range and different|

|RoiAlign|CAST + GATHER + PAD + RESHAPE + ADD/SUB/MUL/DIV + MAXIMUM/MINIMUM + FLOOR + TILE + AVERAGE_POOL_2D / MAX_POOL_2D + TRANSPOSE|Input/output rank=4 only; `rois` rank=2 (`[...,4]`), `batch_indices` rank=1 integer; input `C/H/W` must be static positive; `mode` in `{avg,max}`; `coordinate_transformation_mode` in `{half_pixel,output_half_pixel}`; `output_height/output_width` must be positive|

|RotaryEmbedding|TRANSPOSE + SLICE + RESHAPE + CAST + MUL + SUB + ADD + CONCATENATION|Current builtin path supports rank-4 input/output only, `interleaved=0`, and no `position_ids` input; all tensor dtypes must be `FLOAT16/FLOAT32` with output dtype matching input; shapes must be static positive; `cos/sin` must be rank-2 with shape `[seq_len, rotary_embedding_dim/2]`; `rotary_embedding_dim` must be even and `<= head_size`|

|RNN|UNIDIRECTIONAL_SEQUENCE_RNN + REVERSE_V2 + CONCATENATION + TRANSPOSE + EXPAND_DIMS + SLICE + SQUEEZE + RESHAPE|`direction` in `{forward,reverse,bidirectional}`, `layout=0`; `sequence_lens` unsupported; `W/R` must be constant rank-3 with `num_directions` matching `direction`; optional `B` must be constant shape `[num_directions, 2*hidden_size]`; optional `initial_h` shape must be `[num_directions, batch, hidden]` (runtime tensor inputs are supported); activations in `{tanh,relu,sigmoid}`; `clip=0`|

|Round|ROUND|-|

|Scatter|CAST + LESS + SELECT + SHAPE + GATHER + RANGE + RESHAPE + TILE + CONCATENATION + MUL + ADD + SUB + FILL + SCATTER_ND|Alias of `ScatterElements`; `reduction=none` only; `axis` must be in range; `indices` dtype must be integer; `updates/output` dtype must match `data` dtype|

|ScatterND|CAST + SHAPE + FILL + MUL + SCATTER_ND + SUB + ADD|`reduction=none` only; data/updates/output dtypes must match (numeric), indices dtype must be integer, indices last dim must be static positive and `<= data rank`|

|ScatterElements|CAST + LESS + SELECT + SHAPE + GATHER + RANGE + RESHAPE + TILE + CONCATENATION + MUL + ADD + SUB + FILL + SCATTER_ND|`reduction=none` only; `data/indices/updates` must have same rank; `axis` must be in range; `indices` dtype must be integer; `updates/output` dtype must match `data` dtype; output shape must match `data` shape|

|TensorScatter|CAST + GATHER + RESHAPE + ADD (+ FLOOR_MOD for `mode=circular`) + CONCATENATION + FILL + MUL + SUB + SCATTER_ND|`data/updates/output` rank must match and `updates` shape must be static positive; `axis` must be in range; `mode` in `{linear,circular}`; optional `write_indices` must be rank-1 integer tensor with length `>= updates.shape[0]`; `output` dtype must match `data` dtype; `mode=circular` requires static positive axis dim|

|Selu|MAXIMUM + MINIMUM + EXP + SUB + MUL + ADD|Input/output dtype must be `FLOAT16` or `FLOAT32`|

|Shape|SHAPE (+ SLICE for `start/end`)|Output dtype must be `INT32` or `INT64`; `start/end` slicing follows ONNX normalization|

|Shrink|ADD + SUB + LESS + GREATER + SELECT_V2 (+ optional CAST)|Input/output dtype must be `FLOAT16` or `FLOAT32`|

|Sigmoid|LOGISTIC|-|

|Sign|SIGN|-|

|Sin|SIN|-|

|Sinh|SUB + EXP + MUL|Input/output dtype must be `FLOAT16` or `FLOAT32`|

|Size|SHAPE + REDUCE_PROD (+ optional CAST)|Computes tensor element count via `Shape -> ReduceProd`; output dtype follows ONNX output type (`INT32/INT64`)|

|StringNormalizer|RESHAPE (no-op) / EQUAL + LOGICAL_OR + LOGICAL_NOT + WHERE + GATHER (+ EXPAND_DIMS for rank-2) / const-fold|Input/output dtype must be `STRING`, `locale` must be `''` or `en_US`. Runtime path supports only `case_change_action=NONE` (or empty). For non-constant input, stopword filtering is supported only when `is_case_sensitive=1` and input rank is 1 or 2 (`rank=2` follows current onnx2tf behavior and processes the first row). Constant-input path is folded at conversion time and supports `LOWER/UPPER`, case-insensitive matching, and empty-result fallback (`""`) semantics.|

|Slice|SLICE / STRIDED_SLICE / REVERSE_V2|`starts` must be constant input/attr. `ends` is usually constant input/attr; dynamic `ends` is supported only for rank-1 axis-0 prefix slice (`start=0`, `step=1`). `steps=0` unsupported. Negative `steps` are supported only for full-axis reverse pattern (`start=-1`, very negative `end`, `step=-1`) via `REVERSE_V2`|

|Softmax|SOFTMAX (+ transpose in/out for non-last axis)|`axis` must be in range (negative axis normalized)|

|SoftmaxCrossEntropyLoss|TRANSPOSE + SOFTMAX + LOG + CAST + EQUAL + SELECT_V2 + ONE_HOT + MUL + SUM + SUB (+ optional GATHER/MEAN/DIV)|Input/output dtype must be `FLOAT16/FLOAT32`; labels dtype must be integer; input rank must be `>=2` with static positive class dim at axis 1; optional weight must be rank-1 float tensor of length `C`; `reduction` in `{none,sum,mean}`; optional output[1] log-prob tensor is supported|

|Softplus|EXP + ADD + LOG|Input/output dtype must be `FLOAT16` or `FLOAT32`|

|Softsign|ABS + ADD + DIV|Input/output dtype must be `FLOAT16` or `FLOAT32`|

|STFT|SLICE + MUL + RESHAPE + BATCH_MATMUL + CONCATENATION (+ optional CAST)|Current builtin path supports rank-2 signal input only with `onesided=1`; `frame_step`, `window`, and `frame_length` inputs must be constant; shapes must be static positive with `signal_length >= frame_length`; `window` length must equal `frame_length`; output shape must be `[batch, num_frames, frame_length//2 + 1, 2]`|

|SpaceToDepth|SPACE_TO_DEPTH|`blocksize > 1`, rank=4 (NCHW)|

|Split|SLICE|`axis` must be in range; explicit split sizes (input/attr) must be constant and count must match outputs; without explicit split sizes, axis dim must be known and divisible by output count|

|Sqrt|SQRT|-|

|Squeeze|SQUEEZE|Axes must be constant when provided via input tensor|

|Sub|SUB|-|

|Sum|ADD (chained for >2 inputs)|At least 2 inputs|

|Tan|SIN + COS + DIV|Input/output dtype must be `FLOAT16` or `FLOAT32`|

|Tanh|TANH|-|

|ThresholdedRelu|GREATER + CAST + MUL|Input/output dtype must be `FLOAT16` or `FLOAT32`|

|Tile|CAST + TILE|`multiples` must be rank-1 integer tensor; if input rank is static, `len(multiples)` must match input rank; constant `multiples` must be non-negative|

|TopK|TOPK_V2 (+ optional TRANSPOSE + NEG + CAST + SQUEEZE)|Input rank must be `>=1`; input dtype must be `FLOAT16/FLOAT32`; `axis` must be in range; `largest` must be `0` or `1`; `sorted` must be `1`; `k` must be scalar-like (`[]` or `[1]`) and integer dtype; indices output dtype must be `INT32` or `INT64`|

|Transpose|TRANSPOSE|Permutation input must be constant|

|Trilu|MUL / LOGICAL_AND|Input rank must be `>=2`; matrix dims must be static positive; optional `k` input must be constant|

|Unique|CAST + FLOOR_MOD + UNIQUE + CONCATENATION|Input dtype must be integer; output[0] dtype must be integer; `sorted` must be `0` or `1`; when `axis` is specified, only `axis=0` is supported and input must be rank-2 with static positive second dimension; builtin path supports output[0] only (other outputs must be unused)|

|Unsqueeze|RESHAPE|Axes must be constant and unique. Axis normalization follows output-rank semantics (`output_rank = input_rank + len(axes)`), so opset8-style patterns such as `input_rank=2, axes=[2,3]` are supported|

|Upsample|RESIZE_NEAREST_NEIGHBOR / RESIZE_BILINEAR / (cubic) RESHAPE + BATCH_MATMUL + RESHAPE + BATCH_MATMUL|Legacy alias of `Resize` lowered by the same builder. Rank-4 only; supports constrained `nearest`/`linear` builtin resize and constrained `cubic` decomposition path. Parameter input follows `Upsample` 2-input form (`scales`/`sizes`) with the same constant/dynamic integer constraints as `Resize`|

|Where|CAST + SELECT|Condition input dtype must be BOOL or numeric|

|Xor|NOT_EQUAL|-|

Custom-op candidates in flatbuffer_direct (opt-in)

|ONNX OP|Default policy|When enabled|

|:-|:-|:-|

|DeformConv|builtin_supported on constrained standard 2D float pattern (`group=1`, `offset_group=1`); otherwise explicit_error (`custom_op_candidate_disabled`)|Grouped or otherwise unsupported patterns can be lowered to TFLite `CUSTOM` when `--flatbuffer_direct_allow_custom_ops` is enabled and allowlist passes|

|DynamicQuantizeLinear|builtin_supported on constrained float-input/uint8-output pattern; otherwise explicit_error (`custom_op_candidate_disabled`)|Unsupported patterns can be lowered to TFLite `CUSTOM` when `--flatbuffer_direct_allow_custom_ops` is enabled and allowlist passes|

|Einsum|builtin_supported on constrained equations; otherwise explicit_error (`custom_op_candidate_disabled`)|Unsupported equations can be lowered to TFLite `CUSTOM` when `--flatbuffer_direct_allow_custom_ops` is enabled and allowlist passes|

|GridSample|builtin_supported on constrained rank-4/5 bilinear pattern; otherwise explicit_error (`custom_op_candidate_disabled`)|Unsupported GridSample patterns can be lowered to TFLite `CUSTOM` when `--flatbuffer_direct_allow_custom_ops` is enabled and allowlist passes|

|If|builtin_supported on constrained patterns (NMS-guard, axis0 Add-branch, SequenceConstruct Add-branch, and nested ReduceMin/Add); otherwise explicit_error (`custom_op_candidate_disabled`)|Unsupported If patterns can be lowered to TFLite `CUSTOM` when `--flatbuffer_direct_allow_custom_ops` is enabled and allowlist passes|

|LogSoftmax|builtin_supported on constrained axis pattern; otherwise explicit_error (`custom_op_candidate_disabled`)|Unsupported patterns can be lowered to TFLite `CUSTOM` when `--flatbuffer_direct_allow_custom_ops` is enabled and allowlist passes|

|Loop|builtin_supported on constrained patterns (static-unroll / WHILE loop-carried forms); otherwise explicit_error (`custom_op_candidate_disabled`)|Unsupported Loop patterns can be lowered to TFLite `CUSTOM` when `--flatbuffer_direct_allow_custom_ops` is enabled and allowlist passes|

|LSTM|builtin_supported on constrained forward/reverse/bidirectional pattern; otherwise explicit_error (`custom_op_candidate_disabled`)|Unsupported patterns can be lowered to TFLite `CUSTOM` when `--flatbuffer_direct_allow_custom_ops` is enabled and allowlist passes|

|NonMaxSuppression|builtin_supported on constrained rank-3 boxes/scores pattern; otherwise explicit_error (`custom_op_candidate_disabled`)|Unsupported patterns can be lowered to TFLite `CUSTOM` when `--flatbuffer_direct_allow_custom_ops` is enabled and allowlist passes|

|QLinearConv|builtin_supported on constrained regular/depthwise pattern; otherwise explicit_error (`custom_op_candidate_disabled`)|Unsupported grouped patterns can be lowered to TFLite `CUSTOM` when `--flatbuffer_direct_allow_custom_ops` is enabled and allowlist passes|

|RoiAlign|builtin_supported on constrained pattern; otherwise explicit_error (`custom_op_candidate_disabled`)|Unsupported RoiAlign patterns can be lowered to TFLite `CUSTOM` when `--flatbuffer_direct_allow_custom_ops` is enabled and allowlist passes|

|Scan|explicit_error (`custom_op_candidate_disabled`)|Lowered to TFLite `CUSTOM` when `--flatbuffer_direct_allow_custom_ops` is enabled and allowlist passes|

|ScatterElements|builtin_supported on constrained pattern; otherwise explicit_error (`custom_op_candidate_disabled`)|Unsupported ScatterElements patterns can be lowered to TFLite `CUSTOM` when `--flatbuffer_direct_allow_custom_ops` is enabled and allowlist passes|

|SequenceAt|explicit_error (`custom_op_candidate_disabled`)|Lowered to TFLite `CUSTOM` when `--flatbuffer_direct_allow_custom_ops` is enabled and allowlist passes|

|SequenceConstruct|explicit_error (`custom_op_candidate_disabled`)|Lowered to TFLite `CUSTOM` when `--flatbuffer_direct_allow_custom_ops` is enabled and allowlist passes|

|SequenceErase|explicit_error (`custom_op_candidate_disabled`)|Lowered to TFLite `CUSTOM` when `--flatbuffer_direct_allow_custom_ops` is enabled and allowlist passes|

|SequenceInsert|explicit_error (`custom_op_candidate_disabled`)|Lowered to TFLite `CUSTOM` when `--flatbuffer_direct_allow_custom_ops` is enabled and allowlist passes|

|SequenceLength|explicit_error (`custom_op_candidate_disabled`)|Lowered to TFLite `CUSTOM` when `--flatbuffer_direct_allow_custom_ops` is enabled and allowlist passes|

|TopK|builtin_supported on constrained float-input/scalar-k pattern; otherwise explicit_error (`custom_op_candidate_disabled`)|Unsupported patterns can be lowered to TFLite `CUSTOM` when `--flatbuffer_direct_allow_custom_ops` is enabled and allowlist passes|

|Unique|builtin_supported on constrained integer pattern (output[0]-only); otherwise explicit_error (`custom_op_candidate_disabled`)|Unsupported patterns can be lowered to TFLite `CUSTOM` when `--flatbuffer_direct_allow_custom_ops` is enabled and allowlist passes|

Demo

Video speed is adjusted approximately 50 times slower than actual speed.

Environment

- Linux / Windows

- onnx==1.20.1

- onnxruntime==1.24.3

- onnxsim-prebuilt==0.4.39.post2

- onnxoptimizer==0.4.2

- sne4onnx>=2.0.1

- sng4onnx>=2.0.1

- tensorflow==2.19.0

- tf-keras==2.19.0

- ai-edge-litert==2.1.2

- h5py==3.12.1

- psutil==5.9.5

- ml_dtypes==0.5.1

- flatbuffers==25.12.19

Sample Usage

1. Install

Note:

1. If you are using TensorFlow v2.13.0 or earlier, use a version older than onnx2tf v1.17.5. onnx2tf v1.17.6 or later will not work properly due to changes in TensorFlow’s API.

2. The latest onnx2tf implementation is based on Keras API 3 and will not work properly if you install TensorFlow v2.15.0 or earlier.

3. Starting with onnx2tf v2.0.0, due to onnxruntime issues, onnx2tf will no longer support environments older than Python 3.10. Accordingly, the Docker Image has been upgraded to Ubuntu 24.04. The dependency on onnx-graphsurgeon has also been completely removed. onnxruntime v1.24.1: https://github.com/microsoft/onnxruntime/releases/tag/v1.24.1

- HostPC

Click to expand

- When using GHCR, see `Authenticating to the Container registry`

https://docs.github.com/en/packages/working-with-a-github-packages-registry/working-with-the-container-registry#authenticating-to-the-container-registry

```bash

# PAT authentication is required to pull from GHCR.

docker login ghcr.io

Username (xxxx): {Enter}

Password: {Personal Access Token}

Login Succeeded

# Start an interactive session on the terminal.

docker run --rm -it \

-v `pwd`:/workdir \

-w /workdir \

ghcr.io/pinto0309/onnx2tf:2.3.16

or

# Authentication is not required for pulls from Docker Hub.

# Start an interactive session on the terminal.

docker run --rm -it \

-v `pwd`:/workdir \

-w /workdir \

docker.io/pinto0309/onnx2tf:2.3.16

or

# Direct execution in Docker

# The model conversion is performed within Docker,

# but the model is output to the host PC's storage.

docker run --rm \

--user $(id -u):$(id -g) \

-v $(pwd):/work \

docker.io/pinto0309/onnx2tf:2.3.16 \

onnx2tf -i /work/densenet-12.onnx -o /work/saved_model

or

curl -LsSf https://astral.sh/uv/install.sh | sh

uv python install 3.12.12

uv venv -p 3.12.12 .venv

source .venv/bin/activate

uv pip install -U onnx2tf

or

curl -LsSf https://astral.sh/uv/install.sh | sh

uv python install 3.12.12

uv venv -p 3.12.12 .venv

source .venv/bin/activate

uv sync

or

pip install -e .

or

docker buildx build \

--platform linux/amd64 \

--build-arg BUILD_ARCH=linux/amd64 \

--progress=plain \

-t onnx2tf:amd64 \

--load .

or

# It is possible to cross-compile an arm64 environment on an x64 environment.

docker buildx build \

--platform linux/arm64 \

--build-arg BUILD_ARCH=linux/arm64 \

--progress=plain \

-t onnx2tf:arm64 \

--load .

```

2. Run test

Only patterns that are considered to be used particularly frequently are described. In addition, there are several other options, such as disabling Flex OP and additional options to improve inference performance. See: CLI Parameter

# Float32, Float16

# This is the fastest way to generate tflite.

# Improved to automatically generate `signature` without `-osd` starting from v1.25.3.

# Also, starting from v1.24.0, efficient TFLite can be generated

# without unrolling `GroupConvolution`. e.g. YOLOv9, YOLOvN

# Conversion to other frameworks. e.g. TensorFlow.js, CoreML, etc

# https://github.com/PINTO0309/onnx2tf#19-conversion-to-tensorflowjs

# https://github.com/PINTO0309/onnx2tf#20-conversion-to-coreml

wget https://github.com/PINTO0309/onnx2tf/releases/download/0.0.2/resnet18-v1-7.onnx

onnx2tf -i resnet18-v1-7.onnx

ls -lh saved_model/

assets

fingerprint.pb

resnet18-v1-7_float16.tflite

resnet18-v1-7_float32.tflite

saved_model.pb

variables

TF_CPP_MIN_LOG_LEVEL=3 \

saved_model_cli show \

--dir saved_model \

--signature_def serving_default \

--tag_set serve

The given SavedModel SignatureDef contains the following input(s):

inputs['data'] tensor_info:

dtype: DT_FLOAT

shape: (-1, 224, 224, 3)

name: serving_default_data:0

The given SavedModel SignatureDef contains the following output(s):

outputs['output_0'] tensor_info:

dtype: DT_FLOAT

shape: (-1, 1000)

name: PartitionedCall:0

Method name is: tensorflow/serving/predict

# In the interest of efficiency for my development and debugging of onnx2tf,

# the default configuration shows a large amount of debug level logs.

# However, for most users, a large number of debug logs are unnecessary.

# If you want to reduce the amount of information displayed in the conversion log,

# you can change the amount of information in the log by specifying the

# `--verbosity` or `-v` option as follows.

# Possible values are "debug", "info", "warn", and "error".

wget https://github.com/PINTO0309/onnx2tf/releases/download/0.0.2/resnet18-v1-7.onnx

onnx2tf -i resnet18-v1-7.onnx -v info

# Override undefined batch size or other dimensions with static values.

# If the model has undefined dimensions, rewriting them to a static size will significantly

# improve the success rate of the conversion.

# The `-b` option overwrites the zero-dimensional batch size with the number specified

# without input OP name.

# Note that if there are multiple input OPs, the zero dimension of all input OPs is

# forced to be rewritten.

# The `-sh/--shape-hints` option provides shape hints for input tensors with undefined

# dimensions, significantly improving the conversion success rate for models with dynamic

# input shapes. Specifying this option in combination with the `-b` option will further

# improve the success rate of model conversion. The `-sh` option does not change ONNX

# input OPs to static shapes.

# The `-ois/--overwrite_input_shape` option allows undefined dimensions in all dimensions,

# including the zero dimensionality, to be overwritten to a static shape, but requires

# the input OP name to be specified.

# e.g. -ois data1:1,3,224,224 data2:1,255 data3:1,224,6

wget https://github.com/PINTO0309/onnx2tf/releases/download/0.0.2/resnet18-v1-7.onnx

onnx2tf -i resnet18-v1-7.onnx -b 1

or

onnx2tf -i resnet18-v1-7.onnx -sh data:1,3,224,224 -b 1

or

onnx2tf -i resnet18-v1-7.onnx -ois data:1,3,224,224

# Suppress automatic transposition of input OPs from NCW, NCHW, NCDHW to NWC, NHWC, NDHWC.

# onnx2tf is a specification that automatically transposes the input OP to [N,H,W,C] format

# before converting the model. However, since onnx2tf cannot determine from the structure of

# the model whether the input data is image, audio data, or something else, it unconditionally

# transposes the channels. Therefore, it is the models of STT/TTS models where the input is

# not NHWC that tend to have particular problems with the automatic transposition of the

# input OP.

# If you do not want input OPs to be automatically transposed, you can disable automatic

# transposition of input OPs by specifying the `-kat` option.

wget https://github.com/PINTO0309/onnx2tf/releases/download/1.1.28/double_gru.onnx

# INPUT OPs: "spec": float32[1,3,257,1], "states_in": float32[2,1,32]

# The following command suppresses the automatic transposition of "states_in" and converts it.

onnx2tf -i double_gru.onnx -kat states_in

# Keras h5 format

# .h5, .json, .keras, .weights.h5, .weights.keras, .data-00000-of-00001, .index

wget https://github.com/PINTO0309/onnx2tf/releases/download/0.0.2/resnet18-v1-7.onnx

onnx2tf -i resnet18-v1-7.onnx -oh5

# Keras keras_v3 format (TensorFlow v2.12.0 or later only)

wget https://github.com/PINTO0309/onnx2tf/releases/download/0.0.2/resnet18-v1-7.onnx

onnx2tf -i resnet18-v1-7.onnx -okv3

# TensorFlow v1 (.pb) format

wget https://github.com/PINTO0309/onnx2tf/releases/download/0.0.2/resnet18-v1-7.onnx

onnx2tf -i resnet18-v1-7.onnx -otfv1pb

# Automatic JSON generation only

# Generates an optimal parameter replacement JSON file for model conversion.

# The JSON file is saved to {model_name}_auto.json when conversion errors occur

# or accuracy issues are detected and the feature is explicitly enabled.

onnx2tf -i model.onnx -agj

# Accuracy validation only (no JSON generation)

# Validates the accuracy between ONNX and TensorFlow outputs without generating

# any parameter replacement JSON file.

onnx2tf -i model.onnx -cotof

# Accuracy validation + automatic JSON generation

# First generates an optimal parameter replacement JSON file, then uses it

# to validate the model accuracy. This ensures the best possible conversion accuracy.

onnx2tf -i model.onnx -agj -cotof

# Accuracy validation with opt-in JSON generation on error

# Generates a parameter replacement JSON only when accuracy errors greater than 1e-2

# are detected during validation.

onnx2tf -i model.onnx -cotof -agje

# INT8 Quantization, Full INT8 Quantization

# INT8 Quantization with INT16 activation, Full INT8 Quantization with INT16 activation

# Dynamic Range Quantization

wget https://github.com/PINTO0309/onnx2tf/releases/download/1.1.1/emotion-ferplus-8.onnx

# INT8 Quantization (per-channel)

onnx2tf -i emotion-ferplus-8.onnx -oiqt

# INT8 Quantization (per-tensor)

onnx2tf -i emotion-ferplus-8.onnx -oiqt -qt per-tensor

# Split the model at the middle position for debugging

# Specify the input name of the OP

wget https://github.com/PINTO0309/onnx2tf/releases/download/1.25.0/cf_fus.onnx

onnx2tf -i cf_fus.onnx -inimc 448

# Split the model at the middle position for debugging

# Specify the output name of the OP

wget https://github.com/PINTO0309/onnx2tf/releases/download/1.25.0/cf_fus.onnx

onnx2tf -i cf_fus.onnx -onimc dep_sec

# Split the model at the middle position for debugging

# Specify the input/output name of the OP

wget https://github.com/PINTO0309/onnx2tf/releases/download/1.25.0/cf_fus.onnx

onnx2tf -i cf_fus.onnx -inimc 448 -onimc velocity

# Suppress generation of Flex OP and replace with Pseudo-Function

# [

# Asin, Acos, Atan, Abs, PReLU,

# LeakyReLU, Power, GatherND,

# Neg, HardSwish, Erf, GeLU, MatMulInteger,

# ]

# Below is a sample of replacing Erf with another set of operations.

wget https://s3.ap-northeast-2.wasabisys.com/temp-models/onnx2tf_readme/Erf_11.onnx

onnx2tf -i Erf_11.onnx -rtpo Erf

# High-dimensional Transpose decomposition

# If you do not like FlexTranspose being generated, try `-nodaftc`.

# Suppresses the generation of FlexTranspose by decomposing Transpose

# to the specified number of dimensions.

# In TensorFlow v2.12.0 and later, up to 6 dimensions are converted to normal Transpose;

# in v2.11.0 and earlier, up to 5 dimensions are converted to normal Transpose.

# Note that specifying `2` for the `-nodaftc` option causes all Transpose OPs to disappear

# from the model structure.

# Below is an example of decomposing a Transpose of 5 or more dimensions into a Transpose

# of 4 dimensions.

onnx2tf -i xxxx.onnx -nodaftc 4

# High-dimensional Slice(StridedSlice) decomposition

# If your special circumstances do not allow you to deploy a `StridedSlice` with more than

# 5 dimensions to a device, you can use the `-nodafsc` option to decompose the `StridedSlice`

# into a process with 4 or fewer dimensions.

# Below is an example of decomposing a `StridedSlice` of 5 or more dimensions into a

# `StridedSlice` of 4 dimensions.

onnx2tf -i xxxx.onnx -nodafsc 4

# Float16 inference doubling on devices with ARM64 ARMv8.2 or higher instruction set

# Double the inference speed with Float16 precision tflite models on devices with

# high-performance CPUs such as Snapdragon.

# (Pixel 3a, Pixel 5a, Pixel 7, Galaxy M12 and Galaxy S22, ...)

# XNNPACK float16 inference on certain ARM64 cores is 2x faster.

# Unfortunately, Float16 inference cannot be accelerated when using the RaspberryPi4's

# ARM64 CPU.

onnx2tf -i xxxx.onnx -eatfp16

# Parameter replacement (Resize,Transpose,Softmax)

rm replace.json

wget https://github.com/PINTO0309/onnx2tf/releases/download/1.1.27/human_segmentation_pphumanseg_2021oct.onnx

wget https://github.com/PINTO0309/onnx2tf/releases/download/1.1.27/replace.json

onnx2tf -i human_segmentation_pphumanseg_2021oct.onnx -prf replace.json

3. Accuracy check

Click to expand

Perform error checking of ONNX output and TensorFlow output. Verify that the error of all outputs, one operation at a time, is below a certain threshold. Automatically determines before and after which OPs the tool's automatic conversion of the model failed. Know where dimensional compression, dimensional expansion, and dimensional transposition by `Reshape` and `Traspose` are failing. Once you have identified the problem area, you can refer to the tutorial on [Parameter replacement](#parameter-replacement) to modify the tool's behavior.

After many upgrades, the need for JSON parameter correction has become much less common, but there are still some edge cases where JSON correction is required. If the PC has sufficient free space in its RAM, onnx2tf will convert the model while carefully performing accuracy checks on all OPs. Thus, at the cost of successful model conversion, the conversion speed is a little slower. If the amount of RAM required for the accuracy check is expected to exceed 80% of the total available RAM capacity of the entire PC, the conversion operation will be performed without an accuracy check. Therefore, if the accuracy of the converted model is found to be significantly degraded, the accuracy may be automatically corrected by re-conversion on a PC with a large amount of RAM. For example, my PC has 128GB of RAM, but the StableDiffusion v1.5 model is too complex in its structure and consumed about 180GB of RAM in total with 50GB of SWAP space.

`-ois` an option to overwrite the input OP to a static size if it has undefined dimensions. `-cotof` option checks the accuracy of all OPs one by one. `-cotoa` is the error value of the threshold for determining an accuracy error. If there are undefined dimensions in the input OP, it is better to fix them to the static geometry to improve the accuracy of the accuracy measurement.

Also, you can use the `-cind` option to specify custom input for `-cotof`, instead of using the default dummy input. Otherwise, all input values will be set to 1. You can override the dummy input values with `--value_hints` (scalar only, `*:default` supported). For more information about the `-cind` option, please refer to [here](#cli-parameter). If your input is image data in NHWC format, you can also use `--test_data_nhwc_path` to provide fixed test samples for validation. For `-fdots`, the recommended way to resolve dynamic trace shapes is `--shape_hints`. `--test_data_nhwc_path` is also accepted for eligible 4D RGB inputs, and `-cind` remains available when per-input custom data is needed.

Quick difference between `-tdnp` and `-cind`:

- `-tdnp` (`--test_data_nhwc_path`): Validation-only test data for accuracy checks. Expects one NHWC RGB `.npy` (`[N,H,W,3]`). No `mean/std`. For multi-input models, this single array is reused across inputs (per-input mapping is not supported). Also accepted by `-fdots` for eligible 4D RGB inputs.

- `-cind` (`--custom_input_op_name_np_data_path`): Per-input custom data mapping by input name. Supports multi-input/non-image inputs. Also used for `-fdots` trace inputs and INT8 calibration (`-oiqt`) with optional `mean/std`.

The `-cotof` option evaluates Float32 accuracy only. In the default path it checks ONNX against TensorFlow/TFLite outputs. When `--tflite_backend flatbuffer_direct` is used, the base report is ONNX↔TFLite. If `--flatbuffer_direct_output_pytorch` is also enabled, onnx2tf additionally emits ONNX↔PyTorch and combined comparison reports using the same input samples.

```

onnx2tf -i mobilenetv2-12.onnx -ois input:1,3,224,224 -cotof -cotoa 1e-1

or

onnx2tf -i mobilenetv2-12.onnx -b 1 -cotof -cotoa 1e-1

or

onnx2tf -i mobilenetv2-12.onnx -cotof -cotoa 1e-1 -cind "input" "/your/path/x.npy"

or

onnx2tf -i mobilenetv2-12.onnx -cotof -cotoa 1e-1 -tdnp "/your/path/test_data_nhwc.npy"

or

onnx2tf -i mobilenetv2-12.onnx -cotof -cotoa 1e-1 --value_hints "input:0.5" "*:1.0"

```

Click to expand

If you want to match tflite's input/output OP names and the order of input/output OPs with ONNX, you can use the `interpreter.get_signature_runner()` to infer this after using the `-coion` / `--copy_onnx_input_output_names_to_tflite` option to output tflite file. See: https://github.com/PINTO0309/onnx2tf/issues/228

onnx2tf automatically compares the final input/output shapes of ONNX and the generated TFLite and tries to automatically correct the input/output order as much as possible if there is a difference. However, if INT8 quantization is used and there are multiple inputs and outputs with the same shape, automatic correction may fail. This is because TFLiteConverter shuffles the input-output order by itself only when INT8 quantization is performed.

```python

import torch

import onnxruntime

import numpy as np

import onnx2tf

import tensorflow as tf

from ai_edge_litert.interpreter import Interpreter

class Model(torch.nn.Module):

def forward(self, x, y):

return {

"add": x + y,

"sub": x - y,

}

# Let's double check what PyTorch gives us

model = Model()

pytorch_output = model.forward(10, 2)

print("[PyTorch] Model Predictions:", pytorch_output)

# First, export the above model to ONNX

torch.onnx.export(

Model(),

{"x": 10, "y": 2},

"model.onnx",

opset_version=16,

input_names=["x", "y"],

output_names=["add", "sub"],

)

# And check its output

session = onnxruntime.InferenceSession("model.onnx")

onnx_output = session.run(["add", "sub"], {"x": np.array(10), "y": np.array(2)})

print("[ONNX] Model Outputs:", [o.name for o in session.get_outputs()])

print("[ONNX] Model Predictions:", onnx_output)

# Now, let's convert the ONNX model to TF

onnx2tf.convert(

input_onnx_file_path="model.onnx",

output_folder_path="model.tf",

copy_onnx_input_output_names_to_tflite=True,

non_verbose=True,

)

# Now, test the newer TFLite model

interpreter = Interpreter(model_path="model.tf/model_float32.tflite")

tf_lite_model = interpreter.get_signature_runner()

inputs = {

'x': np.asarray([10], dtype=np.int64),

'y': np.asarray([2], dtype=np.int64),

}

tf_lite_output = tf_lite_model(**inputs)

print("[TFLite] Model Predictions:", tf_lite_output)

```

```

[PyTorch] Model Predictions:

{

'add': 12,

'sub': 8

}

[ONNX] Model Outputs:

[

'add',

'sub'

]

[ONNX] Model Predictions:

[

array(12, dtype=int64),

array(8, dtype=int64)

]

[TFLite] Model Predictions:

{

'add': array([12]),

'sub': array([8])

}

```

Click to expand

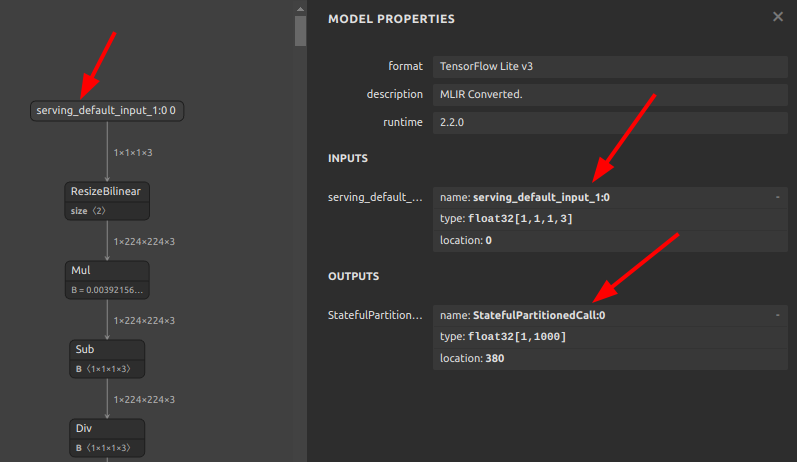

If you do not like tflite input/output names such as `serving_default_*:0` or `StatefulPartitionedCall:0`, you can rewrite them using the following tools and procedures. It can be rewritten from any name to any name, so it does not have to be `serving_default_*:0` or `StatefulPartitionedCall:0`.

https://github.com/PINTO0309/tflite-input-output-rewriter

```bash

# Install tfliteiorewriter

pip install -U tfliteiorewriter

```

- Before

```bash

tfliteiorewriter \

-i xxxx.tflite \

-r serving_default_input_1:0 aaa \

-r StatefulPartitionedCall:0 bbb

```

- After

Click to expand

If you want to embed label maps, quantization parameters, descriptions, etc. into your tflite file, you can refer to the official tutorial and try it yourself. For now, this tool does not plan to implement the ability to append metadata, as I do not want to write byte arrays to the tflite file that are not essential to its operation.

- Adding metadata to TensorFlow Lite models

https://www.tensorflow.org/lite/models/convert/metadata

7. If the accuracy of the INT8 quantized model degrades significantly

Click to expand

It is a matter of model structure. The activation function (`SiLU`/`Swish`), kernel size and stride for `Pooling`, and kernel size and stride for `Conv` should be completely revised. See: https://github.com/PINTO0309/onnx2tf/issues/269

If you want to see the difference in quantization error between `SiLU` and `ReLU`, please check this Gist by [@motokimura](https://gist.github.com/motokimura) who helped us in our research. Thanks Motoki!

Gist: [Quantization error simulation of SiLU (Swish) activation](https://gist.github.com/motokimura/1a90c0b8c5628914b99a81cd91369636)

The accuracy error rates after quantization for different activation functions are shown in the figure below. The graph plots the distribution of absolute error, so a position with a higher value on the horizontal axis indicates a larger error. The vertical axis is the number of samples. `SiLU (Swish)` produces catastrophic errors after INT8 quantization.

- e.g. YOLOX-Nano

- https://github.com/motokimura/yolox-ti-lite_tflite

- https://github.com/TexasInstruments/edgeai-yolox

|Before|After|

|:-:|:-:|

|`Swish`/`SiLU`

|`ReLU`

|

|`DepthwiseConv2D`

|`Conv2D`

|

|`MaxPool`, kernel_size=5x5,9x9,13x13

|`MaxPool`, kernel_size=3x3

|

```

### Float32 - YOLOX-Nano

(1, 52, 52, 85)

array([[[

[ 0.971787, 0.811184, 0.550566, ..., -5.962632, -7.403673, -6.735206],

[ 0.858804, 1.351296, 1.231673, ..., -6.479690, -8.277064, -7.664936],

[ 0.214827, 1.035119, 1.458006, ..., -6.291425, -8.229385, -7.761562],

...,

[ 0.450116, 1.391900, 1.533354, ..., -5.672194, -7.121591, -6.880231],

[ 0.593133, 2.112723, 0.968755, ..., -6.150078, -7.370633, -6.874294],

[ 0.088263, 1.985220, 0.619998, ..., -5.507928, -6.914980, -6.234259]]]]),

### INT8 - YOLOX-Nano

(1, 52, 52, 85)

array([[[

[ 0.941908, 0.770652, 0.513768, ..., -5.993958, -7.449634, -6.850238],

[ 0.856280, 1.284420, 1.198792, ..., -6.507727, -8.391542, -7.792146],

[ 0.256884, 0.941908, 1.455676, ..., -6.336471, -8.305914, -7.877774],

...,

[ 0.342512, 1.370048, 1.541304, ..., -5.737075, -7.192750, -7.107122],

[ 0.513768, 2.226327, 1.027536, ..., -6.165215, -7.449634, -7.021494],

[ 0.085628, 2.055072, 0.685024, ..., -5.480191, -7.021494, -6.422099]]]]),

```

- Other recommended replacement OP

|Before|After|

|:-:|:-:|

|`HardSwish`

|`ReLU`

|

|`ReLU6`

Paper: [A Quantization-Friendly Separable Convolution for MobileNets](https://arxiv.org/pdf/1803.08607.pdf) https://arxiv.org/pdf/1803.08607.pdf|`ReLU`

|

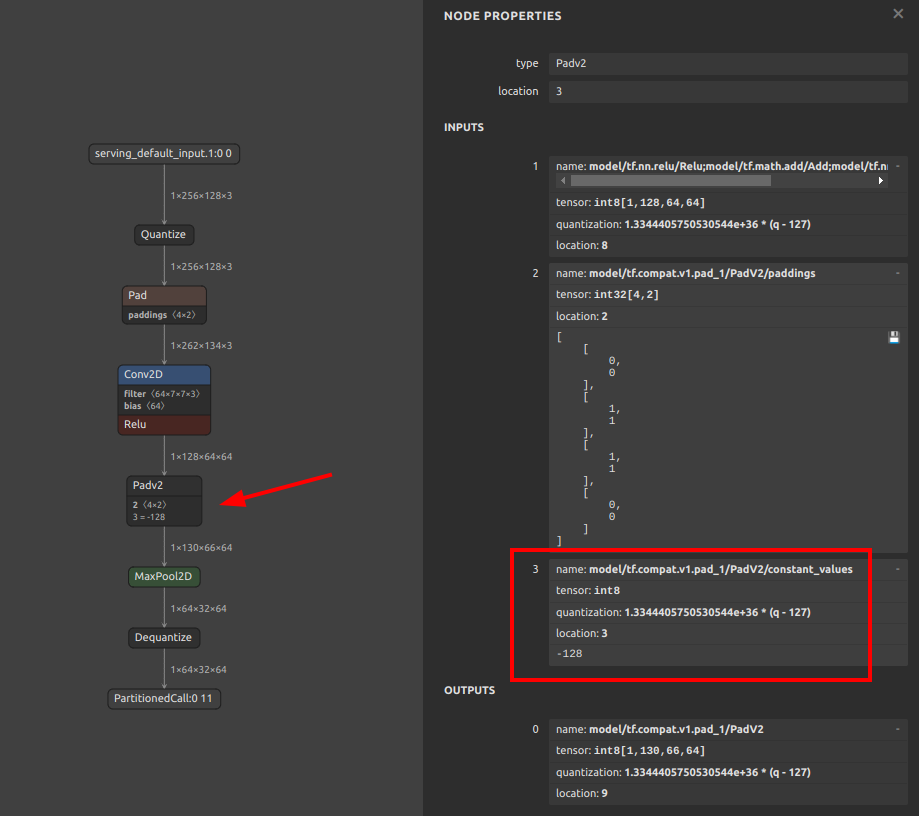

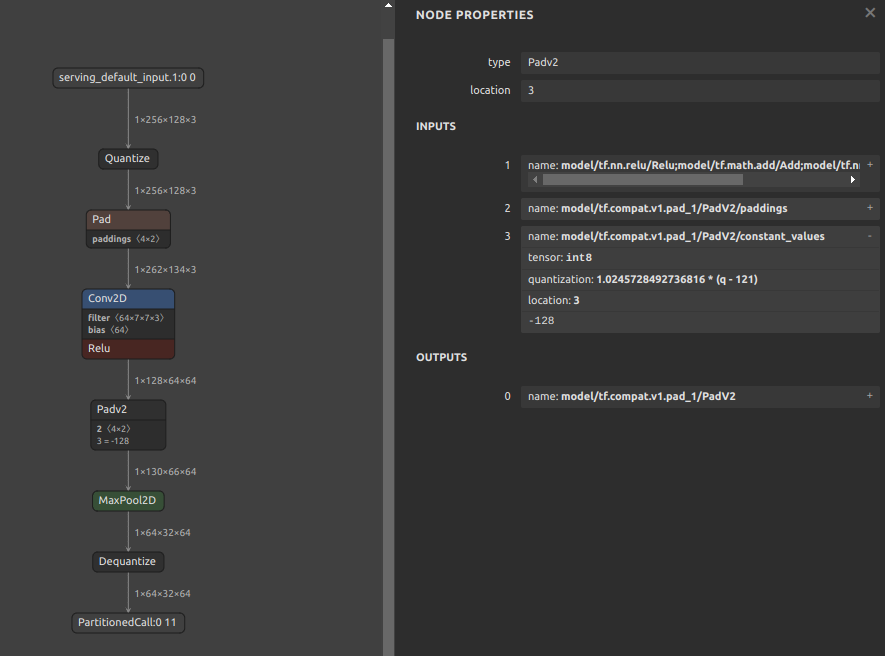

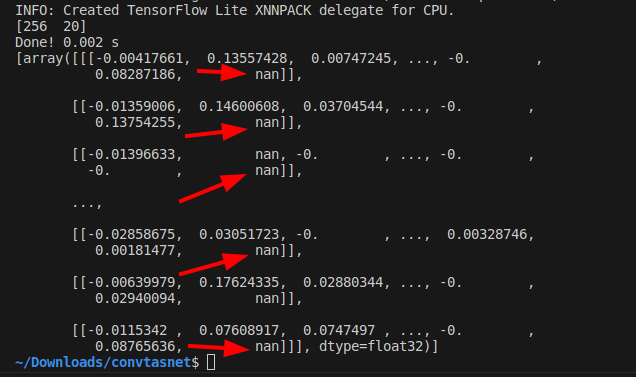

- Quantization range collapse due to non-zero constant padding

If padding is performed with a constant other than zero, the padding value may destroy the quantization range of the input tensor. For example, the pattern is shown in the figure below. The `MaxPool2D` is done after padding the 4 sides of the input tensor with the minimum value of Float32. It seems that if INT8 quantization is performed with this structure, the quantization range is determined by `MaxPool2D` during quantization, including the values padded to the tensor. See: [#444](https://github.com/PINTO0309/onnx2tf/issues/444)

Therefore, the following two similar examples are equally likely to result in divergent output values for the model after INT8 quantization, with all output values being Nan or zero.

1. Pattern with fixed value `-255.0` padded on 4 sides of tensor

2. Pattern with fixed value `-128.0` padded on 4 sides of tensor

8. Calibration data creation for INT8 quantization

Click to expand

Calibration data (.npy) for INT8 quantization (`-cind`) is generated as follows. This is a sample when the data used for training is image data. See: https://github.com/PINTO0309/onnx2tf/issues/222

https://www.tensorflow.org/lite/performance/post_training_quantization

```python

import cv2

import glob

import numpy as np

# Not used during data generation ################################

# You will need to do the calculations yourself using the test data

MEAN = np.asarray([[[[0.485, 0.456, 0.406]]]], dtype=np.float32) # [1,1,1,3]

STD = np.asarray([[[[0.229, 0.224, 0.225]]]], dtype=np.float32) # [1,1,1,3]

# Not used during data generation ################################

files = glob.glob("data/*.png")

img_datas = []

for idx, file in enumerate(files):

bgr_img = cv2.imread(file)

rgb_img = cv2.cvtColor(bgr_img, cv2.COLOR_BGR2RGB)

resized_img = cv2.resize(rgb_img, dsize=(200,112))

extend_batch_size_img = resized_img[np.newaxis, :]

normalized_img = extend_batch_size_img / 255.0 # 0.0 - 1.0

print(

f'{str(idx+1).zfill(2)}. extend_batch_size_img.shape: {extend_batch_size_img.shape}'

) # [1,112,200,3]

img_datas.append(extend_batch_size_img)

calib_datas = np.vstack(img_datas)

print(f'calib_datas.shape: {calib_datas.shape}') # [10,112,200,3]

np.save(file='data/calibdata.npy', arr=calib_datas)

loaded_data = np.load('data/calibdata.npy')

print(f'loaded_data.shape: {loaded_data.shape}') # [10,112,200,3]

"""

-cind INPUT_NAME NUMPY_FILE_PATH MEAN STD

int8_calib_datas = (loaded_data - MEAN) / STD # -1.0 - 1.0

e.g. How to specify calibration data in CLI or Script respectively.

1. CLI

-cind "pc_dep" "data/calibdata.npy" "[[[[0.485,0.456,0.406]]]]" "[[[[0.229,0.224,0.225]]]]"

-cind "feat" "data/calibdata2.npy" "[[[[0.123,...,0.321]]]]" "[[[[0.112,...,0.451]]]]"

2. Script

custom_input_op_name_np_data_path=[

["pc_dep", "data/calibdata.npy", [[[[0.485,0.456,0.406]]]], [[[[0.229,0.224,0.225]]]]],

["feat", "data/calibdata2.npy", [[[[0.123,...,0.321]]]], [[[[0.112,...,0.451]]]],

]

"""

```

Click to expand

If you do not need to perform INT8 quantization with this tool alone, the following method is the easiest.

The `-osd` option will output a `saved_model.pb` in the `saved_model` folder with the full size required for quantization. That is, a default signature named `serving_default` is embedded in `.pb`. The `-b` option is used to convert the batch size by rewriting it as a static integer.

**Note: INT8 TFLite generated by following this procedure as is will result in a model with significantly degraded accuracy. This tutorial only demonstrates the INT8 quantization procedure; if you wish to correct for accuracy, please refer to [Parameter replacement](#parameter-replacement) to correct for transposition errors in the operation.**

```bash

# Ref: https://github.com/onnx/models/tree/main/text/machine_comprehension/bert-squad

wget https://s3.ap-northeast-2.wasabisys.com/temp-models/onnx2tf_248/bertsquad-12.onnx

onnx2tf -i bertsquad-12.onnx -b 1 -osd -cotof

```

Use the `saved_model_cli` command to check the `saved_model` signature. INT8 quantization calibration using signatures allows correct control of the input order of data for calibration. Therefore, calibration with signatures is recommended for INT8 quantization of models with multiple inputs.

```bash

saved_model_cli show --dir saved_model/ --tag_set serve --signature_def serving_default

The given SavedModel SignatureDef contains the following input(s):

inputs['input_ids_0'] tensor_info:

dtype: DT_INT64

shape: (1, 256)

name: serving_default_input_ids_0:0

inputs['input_mask_0'] tensor_info:

dtype: DT_INT64

shape: (1, 256)

name: serving_default_input_mask_0:0

inputs['segment_ids_0'] tensor_info:

dtype: DT_INT64

shape: (1, 256)

name: serving_default_segment_ids_0:0

inputs['unique_ids_raw_output___9_0'] tensor_info:

dtype: DT_INT64

shape: (1)